Datadog's Build Binary Size Reduction: Uncovering Methods, Challenges, and Implications

Source: DEV Community

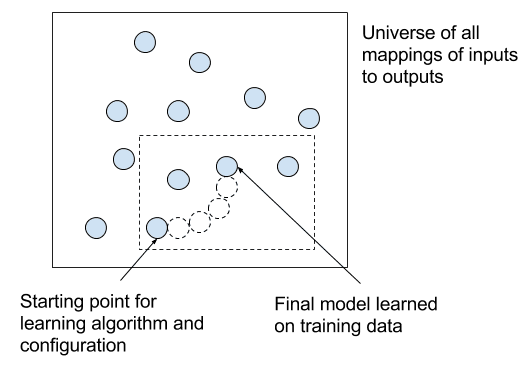

Introduction: The Datadog Binary Size Reduction Mystery

When Datadog announced a 77% reduction in its build binary size, the software engineering community took notice. A shrink that large is more than a number: it translates into better resource efficiency, faster deployments, and lower operational costs. The catch is that Datadog's blog post, while impressive, left out the how. It offered no detailed breakdown of the methods, no discussion of tradeoffs, and no account of the failed iterations that usually precede a result like this.

That omission leaves a real knowledge gap for engineers eager to replicate similar gains. Without understanding the mechanics behind the result, developers risk either over-optimizing into instability or under-optimizing out of fear of breaking production systems. To close that gap, we need to examine the mechanisms likely at play. Datadog's reduction was not achieved through a single silver bullet but through a combination of complementary techniques.
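To put the headline figure in concrete terms: a 77% reduction means the new binary is 23% of its former size, roughly 4.3 times smaller. The minimal sketch below computes both numbers from before and after sizes, which is the first measurement anyone trying to replicate such gains would need. The function names `size_reduction` and `compare_files` are hypothetical helpers for illustration, and the sample sizes (100 and 23) are placeholders chosen only to match the 77% headline, not Datadog's actual binary sizes.

```python
import os


def size_reduction(before_bytes: int, after_bytes: int) -> tuple[float, float]:
    """Return (percent reduction, shrink factor) between two binary sizes.

    Hypothetical helper: percent reduction is (before - after) / before * 100,
    and the shrink factor is before / after.
    """
    if before_bytes <= 0 or after_bytes <= 0:
        raise ValueError("sizes must be positive")
    percent = 100.0 * (before_bytes - after_bytes) / before_bytes
    factor = before_bytes / after_bytes
    return percent, factor


def compare_files(before_path: str, after_path: str) -> tuple[float, float]:
    """Compare the on-disk sizes of two build artifacts (paths are hypothetical)."""
    return size_reduction(os.path.getsize(before_path), os.path.getsize(after_path))


if __name__ == "__main__":
    # Placeholder sizes only: a 77% cut leaves 23% of the original size.
    pct, factor = size_reduction(100, 23)
    print(f"{pct:.0f}% smaller, {factor:.2f}x shrink")  # prints "77% smaller, 4.35x shrink"
```

Reporting both the percentage and the shrink factor is a deliberate choice: percentages understate how dramatic large reductions are, while the factor makes the 4x-plus difference obvious.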

![[Exam Report] Datadog Fundamentals — A Modern Learning Approach Leveraging AI (NotebookLM & Antigravity)](https://media2.dev.to/dynamic/image/width=1200,height=627,fit=cover,gravity=auto,format=auto/https%3A%2F%2Fdev-to-uploads.s3.amazonaws.com%2Fuploads%2Farticles%2F7quhnui1qof3brj75dgy.png)